Suppose there's a data point, or several, that deviates from the general trend, causing large squared errors. These anomalous data points are often called outliers, and they can wreak havoc on the performance of your model.

SST is a measure of the variance in the target variable. It is measured simply as the sum of the squared differences between each observation and the target mean.

R^2 measures how much variance is captured by the model. It is possible to get negative values for R^2 but that would require a fitting procedure other than OLS or non-linear data. Always know your assumptions!

(Adjusted R^2)

Adjusted R^2 is the same as standard R^2 except that it penalizes models when additional features are added.
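For reference, the quantities described above can be written out as follows. This is a sketch using the standard textbook definitions, not formulas quoted from this article: y_i is an observed target value, y-hat_i the prediction, y-bar the target mean, and m the number of observations. The values df_t = m - 1 and df_e = m - k - 1, with k the number of features, are the usual convention and are an assumption here.

```latex
\begin{aligned}
\mathrm{SST} &= \sum_{i=1}^{m} (y_i - \bar{y})^2 \\
\mathrm{SSE} &= \sum_{i=1}^{m} (y_i - \hat{y}_i)^2 \\
R^2 &= 1 - \frac{\mathrm{SSE}}{\mathrm{SST}} \\
R^2_{\mathrm{adj}} &= 1 - \frac{\mathrm{SSE} / df_e}{\mathrm{SST} / df_t},
\qquad df_t = m - 1, \quad df_e = m - k - 1
\end{aligned}
```

Adding a feature raises k and lowers df_e, so adjusted R^2 only improves if SSE drops by enough to offset the lost degree of freedom.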

Squaring the errors is like saying it's okay to miss on the close points, but don't allow large deviations between the model and the most distant points. This makes sense for some datasets but not others. Consider the converse, though.
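To see how much more weight squaring gives a distant point than absolute error does, here is a minimal sketch. The residuals are made up for illustration, and the last one plays the role of a single far-off point.

```python
import numpy as np

# Hypothetical residuals (observed minus predicted); the last one is far off.
residuals = np.array([1.0, -1.0, 2.0, -2.0, 9.0])

raw_sum = residuals.sum()        # signed errors partially cancel
squared = residuals ** 2         # squared errors
absolute = np.abs(residuals)     # absolute errors

# Share of the total error contributed by the distant point under each measure.
outlier_share_squared = squared[-1] / squared.sum()
outlier_share_absolute = absolute[-1] / absolute.sum()

print("sum of raw residuals:", raw_sum)
print("share of squared error from the distant point:", outlier_share_squared)
print("share of absolute error from the distant point:", outlier_share_absolute)
```

With these numbers the distant point accounts for about 89% of the squared error but 60% of the absolute error, which is the sense in which squaring refuses to tolerate large deviations.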

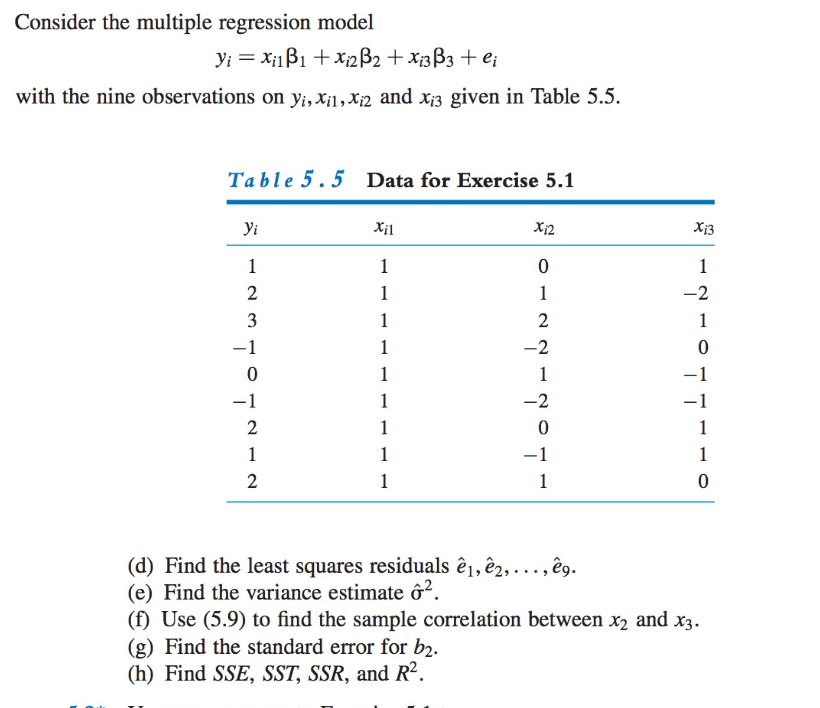

Start with the import, `from sklearn.linear_model import LinearRegression`, and create the model with `lr = LinearRegression(fit_intercept=True)`. Note the call to `reshape(-1,1)`, which creates a fake 2D array (a single column) for fitting. This trick pops up quite frequently so you should remember it.

Keep in mind that y_i is the observed target value, y-hat_i is the predicted value, and y-bar is the mean value. Here, m represents the total number of observations. For example, if there are 25 baby weights, then m equals 25. Lastly, df_t is the degrees of freedom of the estimate of the population variance of the dependent variable and df_e is the degrees of freedom of the estimate of the underlying population error variance. If df_t and df_e don't make much sense, don't worry about it. They're simply used to calculate adjusted R^2.

SSE is a measure of how far off our model's predictions are from the observed values. A value of 0 indicates that all predictions are spot on. In practice, expect a nonzero value. Why? Because there is always irreducible error that we just can't get around, unless we're dealing with some trivial problem.

Why square the errors at all? Answer: squaring the values makes them all positive. If we didn't square them, then we'd have some positive and some negative values that partially cancel when summed. No matter how you slice it, you end up with error that looks smaller than it is in reality.

But why not use absolute error instead of squared error? If you think about what squaring does to large numbers, you'll realize that we're really penalizing large errors.

I'm going to assume you're familiar with basic OOP. If not, you should still get the main idea, though some nuances may be lost. Without further ado, let's create a class to capture the four key statistics about our data.
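A class capturing the four key statistics (SSE, SST, R^2, and adjusted R^2) might look like the following minimal sketch. The class name `Stats`, its method names, and the toy data are hypothetical, not the original code. It also assumes the common conventions df_t = m - 1 and df_e = m - n_features - 1, and it uses NumPy's `polyfit` for the straight-line fit so the example has no dependency beyond NumPy.

```python
import numpy as np


class Stats:
    """Hypothetical helper: regression statistics from observed and predicted values."""

    def __init__(self, y, y_hat, n_features):
        self.y = np.asarray(y, dtype=float)
        self.y_hat = np.asarray(y_hat, dtype=float)
        self.m = self.y.size                    # total number of observations
        self.df_t = self.m - 1                  # assumed: degrees of freedom, total
        self.df_e = self.m - n_features - 1     # assumed: degrees of freedom, error

    def sse(self):
        """Sum of squared differences between observations and predictions."""
        return float(np.sum((self.y - self.y_hat) ** 2))

    def sst(self):
        """Sum of squared differences between observations and the target mean."""
        return float(np.sum((self.y - self.y.mean()) ** 2))

    def r_squared(self):
        """Share of the target's variance captured by the model."""
        return 1.0 - self.sse() / self.sst()

    def adj_r_squared(self):
        """R^2 penalized for the number of features via df_e and df_t."""
        return 1.0 - (self.sse() / self.df_e) / (self.sst() / self.df_t)


# Toy data, made up for illustration, fit with a one-feature least-squares line.
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
y = np.array([2.0, 4.0, 5.0, 4.0, 5.0])
slope, intercept = np.polyfit(x, y, deg=1)
stats = Stats(y, slope * x + intercept, n_features=1)

print("SSE:", stats.sse())
print("SST:", stats.sst())
print("R^2:", stats.r_squared())
print("Adjusted R^2:", stats.adj_r_squared())
```

Taking predictions rather than a fitted model keeps the class independent of any particular library. The same object could be built from the output of a scikit-learn model's `predict` method.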